Should we fear an attack of the voice clones?

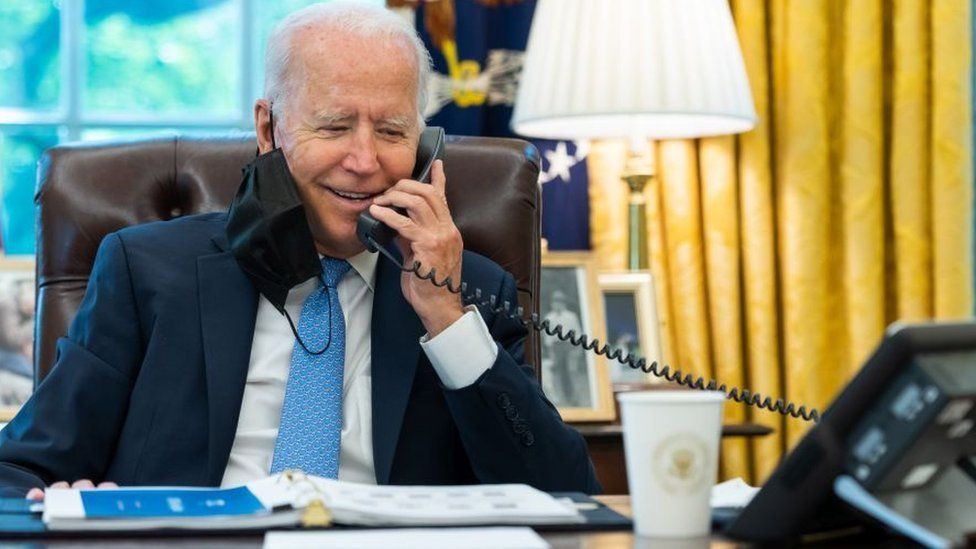

“It’s important that you save your vote for the November election,” the recorded message told prospective voters last month, ahead of a New Hampshire Democratic primary election. It sounded a lot like the President.

But votes do not need to be saved, and the voice was not Joe Biden but most likely a convincing AI clone.

The incident has turned fears about AI-powered audio fakery up to fever pitch, and the technology is getting more powerful, as I learned when I approached a cybersecurity company about the issue.

We set up a call, which went like this:

“Hey, Chris, this is Rafe Pilling from Secureworks. I’m returning your call about a potential interview. How’s it going?”

I said it was going well.

“Great to hear, Chris,” Mr Pilling said. “I appreciate you reaching out. I understand you are interested in voice-cloning techniques. Is that correct?”

Yes, I replied. I’m concerned about malicious uses of the technology.

“Absolutely, Chris. I share your concern. Let’s find time for the interview,” he replied.

But this was not the real Mr Pilling. It was a demonstration laid on by Secureworks of an AI system capable of calling me and responding to my reactions. It also had a stab at imitating Mr Pilling’s voice.

Listen to the voice-cloned call on the latest episode of Tech Life on BBC Sounds.

Millions of calls

“I sound a little bit like a drunk Australian, but that was pretty impressive,” the real Mr Pilling said, as the demonstration ended. It wasn’t completely convincing. There were pauses before answers that would have screamed “robot!” to the wary.

The calls were made using a freely available commercial platform that claims it has the capacity to send “millions” of phone calls per day, using human-sounding AI agents.

In its marketing, it suggests potential uses include call centres and surveys.

Mr Pilling’s colleague, Ben Jacob, had used the tech as an example. That was not because the firm behind the product is accused of doing anything wrong (it is not), but to show the capability of the new generation of systems. And while its strong suit was conversation, not impersonation, another system Mr Jacob demonstrated produced credible copies of voices, based on only small snippets of audio pulled from YouTube.

From a security perspective, Mr Pilling sees the ability of systems to rapidly deploy thousands of these kinds of conversational AIs as a significant, worrying development. Voice cloning is the icing on the cake, he tells me.

Currently, phone scammers have to hire armies of cheap labour to run a mini call centre, or just spend a lot of time on the phone themselves. AI could change all that.

If so, it would reflect the impact of AI more generally.

“The key thing we’re seeing with these AI technologies is the ability to improve the efficiency and scale of existing operations,” he says.

Misinformation

With major elections in the UK, US and India due this year, there are also concerns that audio deepfakes, the name for the kind of sophisticated fake voices AI can create, could be used to generate misinformation aimed at manipulating democratic outcomes.

Senior British politicians have been subject to audio deepfakes, as have politicians in other countries including Slovakia and Argentina. The National Cyber Security Centre has explicitly warned of the threats AI fakes pose to the next UK election.

Lorena Martinez, who works for Logically Facts, a firm working to counter online misinformation, told the BBC that not only were audio deepfakes becoming more common, they are also more challenging to verify than AI images.

“If someone wants to mask an audio deepfake, they can and there are fewer technology solutions and tools at the disposal of fact-checkers,” she said.

Mr Pilling adds that by the time the fake is exposed, it has often already been widely circulated.

Ms Martinez, who had a stint at Twitter tackling misinformation, argues that in a year when over half the world’s population will head to the polls, social media firms must do more and should strengthen teams fighting disinformation.

She also called on developers of the voice-cloning tech to “think about how their tools could be corrupted” before they launch them instead of “reacting to their misuse, which is what we’ve seen with AI chatbots”.

The Electoral Commission, the UK’s election watchdog, told me that emerging uses of AI “prompt clear concerns about what voters can and cannot trust in what they see, hear and read at the time of elections”.

It says it has teamed up with other watchdogs to try to understand the opportunities and the challenges of AI.

But Sam Jeffers, co-founder of Who Targets Me, which monitors political advertising, argues it is important to remember that democratic processes in the UK are pretty robust.

He says we should also guard against the danger of too much cynicism: that deepfakes lead us to disbelieve reputable information.

“We have to be careful to avoid a situation where rather than warning people about dangers of AI, we inadvertently cause people to lose faith in things they can trust,” Mr Jeffers says.
